The Fight Against Antisemitism on Twitter in France

Marc Knobel is director of research at CRIF – the Representative Council of French Jewish Institutions.

It is with great interest that I read the article published on the combatantisemitism.org website on July 1st. The Combat Antisemitism Movement (CAM) informed readers that Twitter had refused to delete an antisemitic tweet about 9/11 by former U.S. Congresswoman Cynthia McKinney. CAM explained that Twitter’s rules-enforcement team had rejected its request to remove the tweet, which featured a conspiracy graphic blaming “Zionists” for the terrorist attacks.

For a French reader like me, this article contained several useful pieces of information:

– We learn that a former member of Congress is using Twitter to post a tweet that we would describe as antisemitic and conspiratorial. In France too, some politicians (on both the right and the left) use this platform to disseminate sensitive, conspiratorial content.

– As we do in France, CAM reported this content to Twitter. On both sides of the Atlantic, we react in the same way. That is fortunate. However, there is one major difference between our two countries: French law allows us to take legal action against this platform, which we do.

– As CAM states, Twitter refuses to delete the reported Tweet from McKinney. We have here a pattern that we are also familiar with in France and in Europe. At Twitter, the deficiencies in moderation no longer need to be proved. They are the subject of a complex legal procedure that I will explain in this article.

I thus intend to come back to this issue and to explain how in France, we fight against antisemitism on Twitter.

Twitter and antisemitism

In France, we recall that on Twitter, the hashtag #agoodjew triggered a record number of antisemitic tweets, revealing the resurgence of a particularly disturbing racism against Jews. This outburst was condemned by several associations, which took Twitter to court to force the network to hand over, with a judge’s authorization, the data that would allow the authors of racist and antisemitic tweets to be identified[1]. After a legal battle lasting months, the American social network delivered data allowing certain authors of antisemitic tweets to be identified.

Another example: the “Jew traps” that flourished in Belgium and on social networks. These were pictures that targeted Jews. For example, a Facebook user posted a picture found on Twitter showing an oven with two banknotes inside, representing this “trap.” It spread very quickly on Twitter. There were several similar pictures, often of an oven containing banknotes, sometimes of a box containing coins. Several of these pictures were reported and removed by the social network, but new, more or less identical ones appeared immediately.

Twitter: Hate in 280 characters

On Twitter, the features are very easy to use. You create an account in a few seconds, using any nickname you like. This nickname (or your last name) is preceded by the famous at sign: @. Then you can subscribe to other accounts, gain followers, follow topics, and discover popular tweets. For this purpose, there is the “Trends for you” section, which lists the most popular topics of the day.

But what happens if you search key words, whether popular or not? What can we find for example with the words “Nigger,” “Jew-boy”, and “Rag head” in January or February 2020? You will obviously find extremely racist, antisemitic, and homophobic tweets. In this case, what is incredible here is the extreme poverty of the language, the misery of the expression, the insults that flow so easily, often with a sexual connotation, the use of emoticons, etc. And yet, these are some of the tweets classified as popular. Why? Simply because they have been retweeted, commented on, or exchanged. No matter what the topic is. In this context, we find at least ten major characteristics of social networks:

– Simplicity. It is very easy to post messages like these. There is absolutely nothing to block them.

– Anonymity is often the rule. Many users hide their real identity by using nicknames. Because they are anonymous, they feel untouchable.

– In the virtual world, internet users are unleashed. On Twitter, insulting is not a problem, nor is mocking. It goes as far as calling for murder.

– Mimicry is the rule. When violent, insulting, and defamatory messages are posted, others appear afterward and in even greater numbers. There is a domino effect, that of violence, without any limit.

– Violent messages are posted with great ease. Besides, who is there to answer? Few people, in fact. It takes courage to do so.

– Often, Twitter and other platforms become permanent people’s courts, and certain topics go viral. In this universe, racism is easily fed. An abundance of violent comments then follows.

– Virality can be linked to breaking news. Some topics unleash passions (for example, the Yellow Vests). There is no room on Twitter, especially within 280 characters (which is not much), to post meaningful arguments. The reactions are markedly impulsive and emotional. Then it is time for gratuitous insults.

– The speed at which these messages are posted is the driving force. Violence and hatred spread on social networks at record speed. It is the time of instant action. The data is then archived.

– Moderation is insufficient.

– On the other hand, educating and deconstructing take a lot of time. Publication and deconstruction do not operate on the same time scale. And there lies the challenge.

– Twitter is (also) this horrible waltz of 280-character messages:

“Fucking Jew, you’re going to die”, “your mother died in the camps, you’re going to die”, “go fuck yourself fucking Jew”, “fucking faggot”, “woman, I rape you”, “I’m going to beat you fucking nigger”, “fuck France”, “I’ll fuck France until she loves me”, “You’re talking about the Armenians of 1915, they should have killed you all”, “something is burning, there’s a Jew next to me”, “speak French, fucking niger”, “…shut up, your French piece of shit, too bad you didn’t die at the Bataclan”, “fucking Muslim, we’ll slit your throat[2]…”

Insufficient moderation?

Like other platforms, Twitter has a feature that allows each user to report any Tweet (text, pictures, images) or profile that violates its rules and terms of use. Several categories of violation are listed, such as hateful conduct[3].

Other categories also appear, such as “glorification of violence,” “violent extremist groups,” “suicide or self-harm,” and “sensitive content.”

Reports can be made directly from a given Tweet or profile for certain violations, including inappropriate or dangerous comments. If you click on “Report a problem,” six categories appear on the screen, including “I’m not interested in this Tweet” or “the comments made are inappropriate or dangerous.” If you click on this last category, a list of options automatically appears:

– The comments made are disrespectful or offensive.

– Private information is disclosed.

– The user engages in targeted harassment.

– The comments incite hatred against a protected class (on the basis of race, religion, gender, sexual orientation, or disability).

– The user threatens to use violence and hurt someone.

– The user encourages self-harm or suicide.

If you click on the fourth item in this list, “The comments incite hatred against a protected class…”, you are asked to specify who is the target of the account you are reporting: you, someone else, or a group of people. Then, if possible, you are asked to add at least five Tweets to the report to give it more weight. You then submit everything and receive a message: “If we find that this account violates the Twitter Rules, we will take appropriate action[4].” After a while, you are notified whether or not the account has been deleted following your report. But according to what criteria? The platform says it reviews accounts. Moderators are said to apply appropriate sanctions for violent threats; insults and slurs against categories of people and/or racist or sexist images; inappropriate content that demeans a person and denies their humanity; and, finally, fear-mongering content.

While Twitter’s mechanism has improved in recent years, it remains inadequate. According to a study mandated by the European Commission, Twitter is the worst platform when it comes to fighting online hate.

During a monitoring operation carried out between January 20 and February 28, 2020, on a sample of 484 pieces of hateful content on four platforms (YouTube, Facebook, Instagram, Twitter), the organization sCAN[5] (Specialised Cyber-Activists Network) found that only 58% of them had been removed over the period. Twitter had the worst results: only 9% of the reported problematic tweets had been removed by the platform and 5% had been made invisible to users in the geographical area. YouTube did slightly better, with 26% of content removed[6].

Another study was conducted from March 17 to May 5, 2020, by the Union of French Jewish Students (UEJF) and the French associations SOS Racisme and SOS Homophobie. It shows a 43% increase in the rate of hateful content posted on Twitter. In more detail, racist content increased by 40.5%, antisemitic content by 20%, and LGBT-phobic content by 48%.

In fact, the UEJF, SOS Racisme, SOS Homophobie, and the association J’accuse…! summoned Twitter before the Court of Paris on Monday, May 11, 2020, arguing that it had failed in a “long and persistent” way to meet its obligations in terms of content moderation[7]. The parties then entered into mediation, which failed, bringing the case back to court.

After the failure of the mediation undertaken with Twitter, several anti-racist associations decided to take the social network to court for its inaction in dealing with hate comments. The Union of French Jewish Students (UEJF), SOS Racisme, Mrap, Licra, J’accuse, and SOS Homophobie believe that the platform is failing in its obligations to moderate content. In summary (emergency) proceedings, the associations requested that an expert be appointed to determine the “human and material means” the company devotes to moderation, with the aim of subsequently initiating another trial on the merits.

This procedure aims “to understand what happens behind the screen when a problematic tweet is reported,” pleaded Stéphane Lilti, attorney for the UEJF. The organizations rely on three observations made by bailiffs at their request in 2020 and 2021. According to the most recent one, “of the 70 tweets reported,” “50 remained online.”

“One thing is for sure, it does not work,” and these “drifts” of moderation affect the usual victims of racism, antisemitism, and homophobia. “We have the right to consider that this must change,” said Stéphane Lilti.

The associations rely on the 2004 French law “on confidence in the digital economy” (LCEN), which requires platforms to “contribute to the fight” against online hate and in particular to “make public the means they devote to the fight against these illicit activities.”

A recent investigation…

The results of a recent investigation confirm the data cited above. Since 2019, the Online Antisemitism Observatory of the CRIF, in collaboration with IPSOS, has been collecting antisemitic content on the internet from over 600 million sources, using a list of keywords. Among the categories studied was “hatred of Jews via hatred of Israel.”

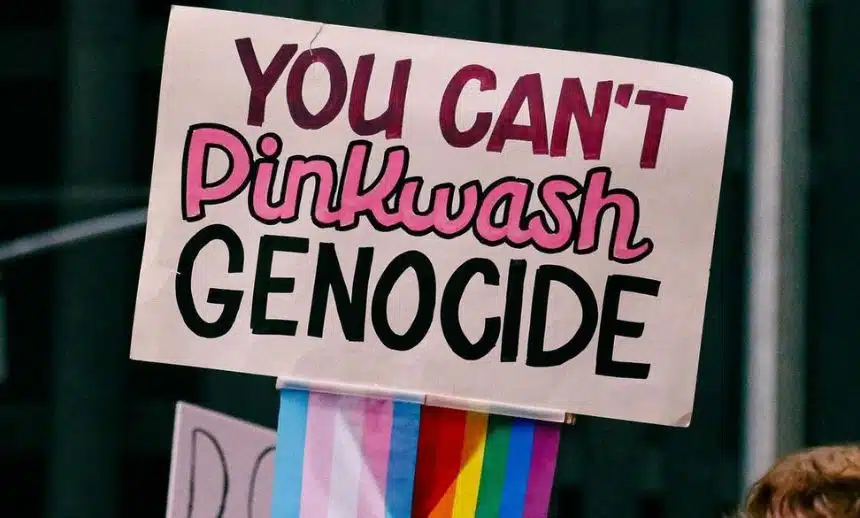

Of particular concern are: defamation and criticism of Israel because it is a Jewish state; the use of symbols and images associated with classical antisemitism to characterize Israel or Zionism; allegations that the State of Israel is comparable to Nazism; and comparisons of Israel’s current policies with those of the Nazis.

What are the conclusions? In 2019, 51,816 pieces of antisemitic content were counted (63% on Twitter, 17% on Facebook, 3% on the International Network.net, 2% on YouTube), with a strong increase in hatred toward Israel, over the first half of 2019 accounting for 79%. For the year as a whole, this category constitutes 39% of the antisemitic comments on the internet[8].

Twitter must account for its moderation features as ordered by the Court of Paris

The following conclusions can be drawn from this short study.

Twitter has to obey the laws of the market, and the market considers that the platform does not bring in enough money. In fact, Twitter has no alternative but to let its user base grow quickly and without barriers.

As a matter of fact, on July 6, 2021, the Court of Paris handed down its ruling in the case brought against Twitter by six associations, including J’accuse, over which I preside.

The court’s decision is very important and is a world first:

“…Orders TWITTER INTERNATIONAL COMPANY to disclose to the plaintiffs and voluntary intervenors within two months of service of this ruling, on the period between the date of issuance of the summons, i.e., 18 May 2020, and the date of entry of this order:

– any administrative, contractual, technical, or commercial document relating to the material and human means implemented within the framework of the Twitter service to combat the dissemination of offenses of apology for crimes against humanity, incitement to racial hatred, hatred towards persons because of their sex, sexual orientation or identity, incitement to violence, in particular incitement to sexual and sexist violence, as well as offenses to human dignity,

– the number, location, nationality, language of the persons assigned to process reports from users of the French platform of its online public communication services,

– the number of reports from users of the French platform of its services, regarding apology for crimes against humanity and incitement to racial hatred, the criteria and the number of subsequent withdrawals…”

Twitter is going to have to be accountable, explain how its moderation works, and finally stop dodging the issue. We maintain that the only way to remedy the deficiencies observed is a very significant improvement of its services, a significant increase in the number of moderators, and a meaningful policy for dealing with online hate. For our part, we continue the fight.

In addition to his current role as director of research at CRIF, Knobel is a former researcher at the Simon Wiesenthal Center and was also Vice President of the International League Against Racism and Antisemitism. An expert in antisemitism, terrorism, and extreme right-wing movements, he has published numerous books and articles, including most recently “Cyberhate. Antisemitism and Propaganda on the Internet” (Hermann Publishing).

As a specialist in extremism on the internet, he has advised the Council of Europe, the French Parliament, and the United Nations. He has given lectures at the National School for Judges in Paris (Ecole Nationale de la Magistrature de Paris) and has been a rapporteur for the Consultative Commission on Human Rights (Commission Nationale Consultative des Droits de l’Homme) since 2004.

[1] On 24 January 2013, the Superior Court of Paris ordered Twitter to provide the competent authorities with data that would identify the authors of antisemitic tweets. Furthermore, the Superior Court ordered that an accessible and visible system be set up on the French platform of this service, allowing any person to bring to its attention illicit content subject to the apology of crimes against humanity and incitement to racial hatred. On 12 July 2013, Twitter indicated that it had provided the French justice system with data likely to allow the identification of some authors of antisemitic tweets.

[2] Marc Knobel, “Twitter, love, hate…”, Huffington Post, 5 April 2016.

[3] Hateful conduct is defined as follows: “You may not promote violence against or directly attack or threaten other people on the basis of race, ethnicity, national origin, caste, sexual orientation, gender, gender identity, religious affiliation, age, disability, or serious disease. We do not allow accounts whose primary purpose is inciting harm towards others on the basis of its username, display name or profile bio. If an account’s profile information includes a violent threat or multiple racist or sexist slurs, qualifiers and clichés, incites fear, or denies a person’s humanity, that account will be permanently suspended.”

[4] In July 2020, in the midst of the coronavirus outbreak, Twitter warned that the unprecedented public health emergency had affected the availability of its support team: “We focus on potential violations that could be harmful, and we work to make it easier for people to report them.”

[5] sCAN aims to bring together expertise, tools, methodology and knowledge on cyber hate and develop global transnational practices to identify, analyze, report and combat online hate speech.

[6] Guillaume Guichard, “Twitter, the worst student in the fight against online hate,” Le Figaro, 3 July 2020.

[7] https://uejf.org/2020/05/12/luejf-assigne-twitter-en-justice-pour-son-inaction-

[8] See on this subject Marc Knobel, “La détestation d’Israël sur le Net”, La Revue des Deux Mondes, October 2020, pp. 58-62.
